In healthcare, AI output that sounds plausible isn’t enough. When payers use medical charts for downstream programs like HEDIS®, Risk Adjustment, and Utilization Management, the bar for AI accuracy is much higher.

No one in healthcare can afford “AI hallucinations”; that is, clinical concepts cannot be inferred by or generated with AI, unless they are explicitly present in the source record. Moreover, we need replicability: the same chart must produce the same output every time.

In medical record review (MRR) workflows tied to quality, risk adjustment, and compliance programs, inaccurate or inconsistent outputs can introduce compliance risk and undermine audit readiness.

This blog explains what zero-hallucination means for MRR, why generic LLMs struggle with it, and how HiLabs is designing Clinical AI for MRR that remains evidence-grounded and repeatable.

What Is Zero-Hallucination in Medical Record Review—and Why It’s Critical

Zero-hallucination in MRR means that the AI model in question never “makes up” diagnoses, medications, lab values, dates, negations, or clinical context that are not in the chart. Every output must be traceable to specific evidence in the chart—with clear linkage back to where that evidence appears in the document.

In other words, the system does not infer missing context, reinterpret ambiguous phrasing, or “complete” partially documented information. It extracts only what is explicitly present, without exception.

This matters because small deviations when interpreting clinical documentation can materially alter downstream use of the chart, leading to situations like:

- An incorrect inferred diagnosis creates Risk Adjustment exposure

- A missed negation (e.g. “no evidence of heart failure”) reverses clinical meaning

- A non-replicable output weakens audit defensibility

This is why “trustworthy AI” in healthcare is no longer an abstract principle but an operational requirement. Reliability, transparency, and governance are baseline requirements when AI outputs affect quality, risk, or compliance workflows.

Why Medical Record Review Is Especially Hard

Medical records, especially clinical charts, often include messy data. In practice, they frequently exhibit at least one of the following:

- Abbreviations, typos, and shorthand

- Multi-column PDFs, scanned faxes, and complicated tables

- Repeated headers, footers, and broken pagination

- Multiple encounters stitched into one file

At the same time, quality and compliance programs depend directly on clinical data extracted from these charts, and therefore demand consistent, auditable chart data. If the same chart produces different results across runs, trust breaks down immediately. This combination of messy inputs and strict output requirements creates a narrow margin for error.

Where Generic LLMs Fall Short

Large Language Models are powerful at generating language, but that strength is also their weakness in medical record review.

Because they are probabilistic by design, generic LLMs:

- Fill in gaps when information is unclear

- Produce non-deterministic outputs for the same input

- Struggle to guarantee traceability back to specific source evidence

That makes them risky for use cases where zero-hallucination and repeatability are mandatory, not optional. In medical record review, the deficiencies of generic LLMs are not minor trade-offs; they create unacceptable risk.

How HiLabs Achieves Zero-Hallucination and Replicability

HiLabs approaches medical record review as an evidence-based data pipeline, not a text generation application. Every step is designed to extract, normalize, and validate without inventing fictional clinical information. The system is intentionally constrained to eliminate opportunities for inference. Here’s what makes HiLabs AI different:

1. SmartOCR: Evidence-First Document Understanding

HiLabs SmartOCR ties every extracted element to the referenced location in the source document. Text, tables, and forms are reconstructed in the correct reading order, with clear provenance. That “visual grounding” ensures the system only outputs what it can point to in the source document. If an extracted element cannot be tied back to the document, it does not pass downstream.

2. CodEx: Structured Clinical Concepts Extraction, Not Free-Form Generation

Clinical concepts are extracted from the text and enriched with context—such as negation, temporality, and subject—based strictly on what is written in the chart. Key attributes—such as dosage or lab values—are captured only when explicitly present, preventing inference.

The distinction here isn’t the use of AI, but rather how it’s used and the constraints placed on it:

The system extracts exact spans of information from the chart, rather than generating probabilistic interpretations

Context is attached to the concept (e.g., “denies chest pain” ≠ “chest pain”)

Attributes are constrained to what is present in the evidence

3. Term Mapping: Closed-World Clinical Normalization

After extraction, clinical terms need to be mapped to standard ontologies (e.g., ICD-10, SNOMED CT). HiLabs Term Mapping is designed for retrieval and ranking over an immutable index, rather than free-form generation. The system selects from a fixed, pre-indexed ontology and cannot invent codes or concepts, ensuring consistency and auditability.

4. Replicability by Design

In MRR, “same input → same output” is not just a theoretical preference; it’s an operational requirement for scaling chart review workflows. Stable medical chart segmentation with preserved reading order, constrained extraction, and normalization ensures deterministic outputs suitable for production workflows, quality reporting, and compliance.

Together, these guardrails make zero-hallucination and replicability practical, not theoretical.

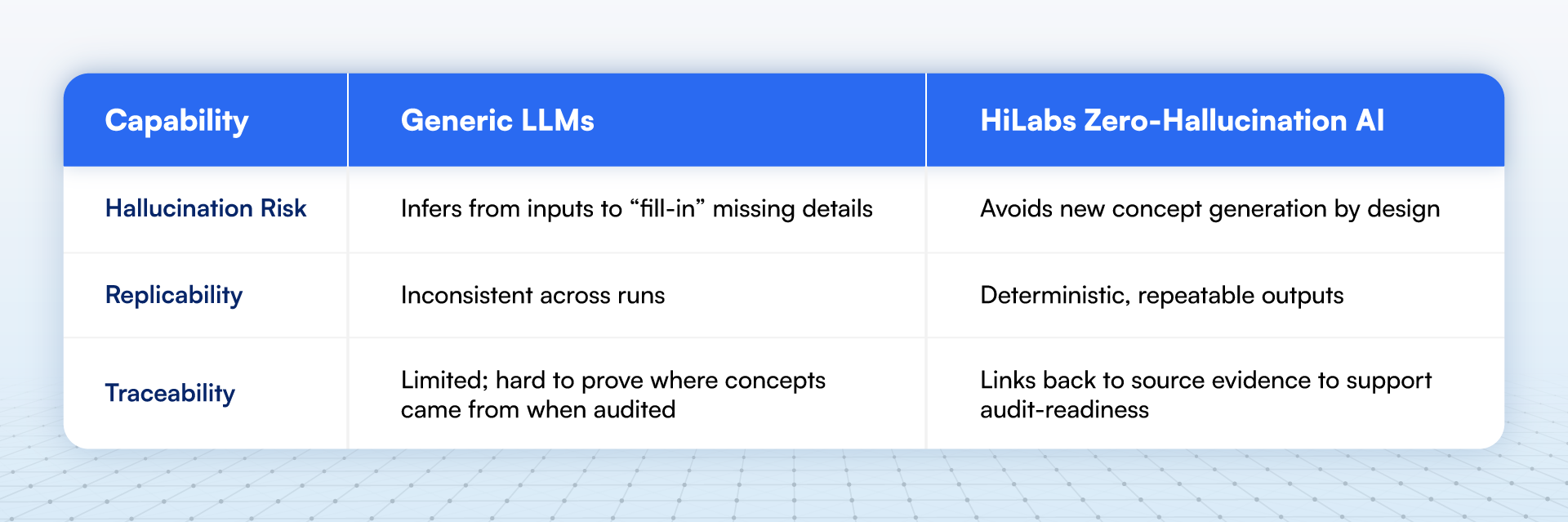

How Zero-Hallucination Differ from Generic LLM-Based Approaches

The difference between generative LLMs and a zero-hallucination architecture is structural rather than incremental.

Why Should You Adopt a Zero-Hallucination Approach for MRR in 2026

As healthcare organizations face increasing scrutiny around data quality, compliance, and AI governance, trustworthy AI is no longer a future concern; it’s a present-day requirement. Systems must be explainable, repeatable, and grounded in evidence to be viable in production clinical workflows. With proven results from AI purpose-built for MRR, health plans have no excuse for relying on inadequate and risky LLM workflows.

Conclusion

In medical record review, AI with zero-hallucination is a prerequisite for establishing confidence in outputs, to reflect only what is documented in the chart. The replicability of those AI outputs ensures that customer confidence holds across repeated runs and production-scale workflows. HiLabs AI is designed to deliver medical record reviews that are grounded in source evidence, constrained by clinical reality, and consistent by design. With HiLabs AI, hallucination-free, audit-ready, AI-based MRR is possible—not just in theory, but in real-world operations.

At HiLabs, we are dedicated to solving the most complex challenges in healthcare data. To learn more about our research and our approach to building next-generation AI for clinical data, visit our website.